The pace of AI innovation is relentless. New models, tools, and capabilities seem to appear every week. But beyond the noise, a more fundamental shift is underway.

Organizations are moving from AI assistants to systems of AI agents.

While assistants help individuals work faster, agents are designed to act—executing tasks, coordinating workflows, and driving outcomes. Unlocking real value from this shift doesn’t come from adding more prompts or bigger models. It comes from intentional architecture. In the agentic era, how agents are designed, scoped, and connected determines whether they become reliable automation—or unpredictable experiments.

What Is an AI Agent?

At its core, an AI agent is a system that can take action on your behalf to achieve a goal, not just generate responses.

Early AI experiences were largely conversational: answering questions, summarizing content, or generating text. Agents extend this model by acting in the world—sending an email, creating a pull request, triggering a workflow, or executing a deployment.

This shift became tangible as coding agents entered IDEs in late 2024 and accelerated further with the introduction of tools like the GitHub Copilot Coding Agent in 2025. But agents on their own are only part of the story. When combined with existing DevOps automation, they dramatically expand what’s possible across the software development lifecycle.

Why Architecture Matters in Agentic Systems

As organizations move beyond basic AI adoption, a new challenge emerges: how do you design agents that are consistent, predictable, and production‑ready?

This is where agentic architectures come into play. When teams begin building systems of agents, a small set of architectural patterns consistently emerges. Which patterns a team chooses, and how it combines them, largely determines whether its agents behave as dependable automation or as unpredictable experiments.

The four most common patterns are:

- Single agents

- Parallel agents

- Sequential agents

- Self-healing agents

To make these patterns tangible, let’s use a simple example: planning a trip.

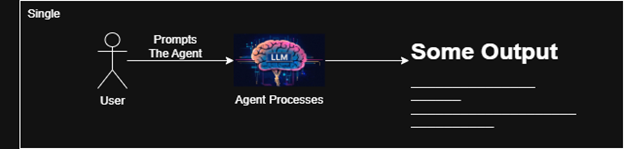

Single Agents: Focused Tasks, Predictable Outcomes

Imagine asking an agent to plan an entire trip to Paris—flights, hotels, and restaurant reservations—based on a single prompt. You might get a reasonable itinerary, but if you run the same prompt multiple times, the results can vary significantly.

Adding more context helps, but it doesn’t guarantee consistency. This is why effective agents are scoped to specific tasks with predictable outcomes.

A single agent should do one thing well. Instead of one agent handling everything, you break the problem into smaller, specialized agents. This decomposition improves reliability and makes behavior easier to reason about, especially in automated systems.
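As a rough illustration of what "scoped" can mean in code, here is a minimal sketch of a single hotel agent with a typed input and a typed output. Everything in it is an assumption made for the example: the `HotelRequest` and `HotelOption` types, the `hotel_agent` function, and the small in-memory catalog that stands in for a real model or search API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HotelRequest:
    city: str
    nights: int
    max_price_per_night: float


@dataclass(frozen=True)
class HotelOption:
    name: str
    price_per_night: float


# Made-up sample data. A real agent would call a model or a hotel search
# service here; this catalog only keeps the sketch runnable.
_CATALOG = {
    "Paris": [
        HotelOption("Hotel Lumiere", 180.0),
        HotelOption("Maison Nord", 140.0),
        HotelOption("Le Grand Palais", 420.0),
    ],
}


def hotel_agent(request: HotelRequest) -> list[HotelOption]:
    """Do one thing: return hotels within budget, cheapest first."""
    options = _CATALOG.get(request.city, [])
    affordable = [
        o for o in options if o.price_per_night <= request.max_price_per_night
    ]
    return sorted(affordable, key=lambda o: o.price_per_night)


if __name__ == "__main__":
    request = HotelRequest("Paris", nights=3, max_price_per_night=200.0)
    for option in hotel_agent(request):
        print(option)
```

Because the agent accepts one kind of request and returns one kind of result, its behavior is easy to check in isolation before it is wired into a larger workflow.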

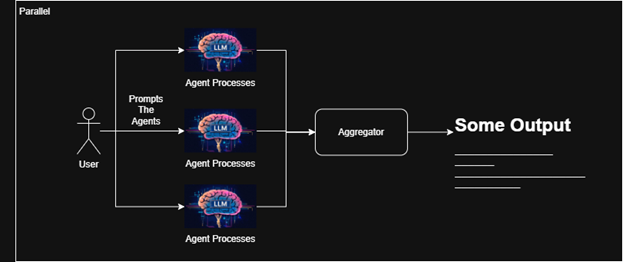

Parallel Agents: Speed Through Decomposition

Parallel agents extend this idea by running multiple single agents at the same time, each with a different goal.

In our travel example, a single prompt like “Plan a trip to Paris in August” could be routed to three agents working in parallel:

- One finds flight options

- One searches for hotels

- One looks for available restaurant reservations

Each agent produces an output, and those outputs are aggregated into a cohesive plan. This approach is faster than a single agent attempting everything at once because the agents run concurrently, and it is more predictable because each agent is narrowly scoped.

In DevOps environments, this pattern is commonly used to run agents for testing, security scanning, and code analysis simultaneously, reducing overall cycle time.

However, parallelism introduces a limitation: the agents don’t influence each other’s decisions.

In our example, the hotel agent might find a great option in northern Paris, while the restaurant agent looks for reservations across the city, because it has no context about where you’re staying.
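One way to picture the parallel pattern is with Python's `asyncio.gather`, which runs several coroutines concurrently and collects their results. The sketch below is illustrative only: the three agent functions are hypothetical stand-ins, and the short sleeps simulate model or API latency.

```python
import asyncio


# Each function is a hypothetical stand-in for a scoped agent.
async def flight_agent(trip: str) -> str:
    await asyncio.sleep(0.05)  # simulated latency
    return f"flight options for: {trip}"


async def hotel_agent(trip: str) -> str:
    await asyncio.sleep(0.05)
    return f"hotel options for: {trip}"


async def restaurant_agent(trip: str) -> str:
    # Receives only the original prompt, so it knows nothing about the
    # hotel that hotel_agent ends up choosing.
    await asyncio.sleep(0.05)
    return f"restaurant options for: {trip}"


async def plan_trip(trip: str) -> dict[str, str]:
    # gather() runs the three agents concurrently and returns their
    # results in the same order the coroutines were passed in.
    flights, hotels, restaurants = await asyncio.gather(
        flight_agent(trip), hotel_agent(trip), restaurant_agent(trip)
    )
    return {"flights": flights, "hotels": hotels, "restaurants": restaurants}


if __name__ == "__main__":
    print(asyncio.run(plan_trip("Paris in August")))
```

Note that every agent is handed the same prompt and nothing else, which is exactly the limitation described above: no agent can see what the others decided.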

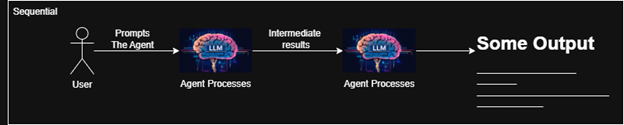

Sequential Agents: Decisions That Build on Context

Sequential agents solve this problem by allowing the output of one agent to become the input of the next.

Now, the hotel booking agent runs first. Once it confirms a booking and produces an address, that output is passed to the restaurant reservation agent. With this added context, the reservation agent can prioritize restaurants within walking distance of the hotel.

You can still run other agents, like flight booking, in parallel, while sequencing only the tasks that depend on prior decisions. This pattern mirrors how many DevOps pipelines work today, where later stages rely on validated outputs from earlier ones.
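The sketch below shows one possible shape for this: the hotel and restaurant agents are chained, and that chain runs alongside the independent flight agent. The agent functions, the `HotelBooking` type, and the sample data are all assumptions invented for the example.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class HotelBooking:
    name: str
    address: str
    district: str


# Made-up sample data standing in for a real restaurant search.
RESTAURANTS_BY_DISTRICT = {
    "Montmartre": ["Le Petit Moulin", "Chez Basile"],
    "Le Marais": ["Cafe Charlot"],
}


async def flight_agent(trip: str) -> str:
    await asyncio.sleep(0.05)  # simulated latency
    return f"flight booked for: {trip}"


async def hotel_agent(trip: str) -> HotelBooking:
    await asyncio.sleep(0.05)
    return HotelBooking("Maison Nord", "1 Rue Exemple, Paris", "Montmartre")


async def restaurant_agent(hotel: HotelBooking) -> list[str]:
    # The input is the hotel agent's output, so the search can be limited
    # to the district where the traveler is staying.
    await asyncio.sleep(0.05)
    return RESTAURANTS_BY_DISTRICT.get(hotel.district, [])


async def hotel_then_restaurants(trip: str) -> tuple[HotelBooking, list[str]]:
    # Sequential step: the restaurant agent waits for the hotel booking.
    hotel = await hotel_agent(trip)
    restaurants = await restaurant_agent(hotel)
    return hotel, restaurants


async def plan_trip(trip: str) -> dict[str, object]:
    # The flight agent has no dependency on the hotel, so it runs in
    # parallel with the hotel-then-restaurants chain.
    flight, (hotel, restaurants) = await asyncio.gather(
        flight_agent(trip), hotel_then_restaurants(trip)
    )
    return {"flight": flight, "hotel": hotel, "restaurants": restaurants}


if __name__ == "__main__":
    print(asyncio.run(plan_trip("Paris in August")))
```

Only the dependent steps are serialized. Everything else stays parallel, so the added context does not cost more waiting time than it has to.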

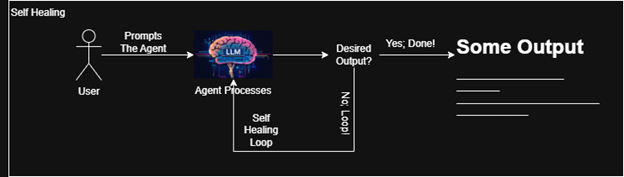

Self-Healing Agents: Designing for Failure

Even with good sequencing, failures happen.

Suppose the restaurant reservation agent identifies a restaurant but can’t book it because the reservation platform doesn’t list it. In many systems, that failure would halt execution. But production-grade agentic systems need to be more resilient.

This is where self-healing agents come in.

By validating outputs programmatically, agents can detect failures and trigger retries or alternative paths. If a reservation attempt fails, the agent can loop back, find another option, and try again.

Of course, self-healing doesn’t mean infinite retries. Guardrails are essential: retry limits, fallback logic, and clear escalation paths to humans when needed. This pattern becomes critical in production systems, where failures are expected and workflows must continue safely and predictably.
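A minimal sketch of such a loop might look like the following. It validates each booking result in code, falls back to the next candidate on failure, stops at a retry limit, and flags the task for a human when nothing works. The names `self_healing_reservation`, `is_valid_confirmation`, `Outcome`, and the `CONF-` confirmation format are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Outcome:
    restaurant: Optional[str]  # the booked restaurant, or None on failure
    attempts: int              # how many bookings were attempted
    escalated: bool            # True when a human needs to step in


def is_valid_confirmation(confirmation: Optional[str]) -> bool:
    # Programmatic validation: accept only a well-formed confirmation code.
    # The "CONF-" prefix is an assumed format for this sketch.
    return bool(confirmation) and confirmation.startswith("CONF-")


def self_healing_reservation(
    candidates: list[str],
    try_book: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> Outcome:
    attempts = 0
    for restaurant in candidates:
        if attempts >= max_attempts:
            break  # guardrail: never retry without a limit
        attempts += 1
        if is_valid_confirmation(try_book(restaurant)):
            return Outcome(restaurant, attempts, escalated=False)
        # Booking failed validation: fall through to the next candidate.
    # Out of candidates or out of attempts: hand the task to a human.
    return Outcome(None, attempts, escalated=True)


if __name__ == "__main__":
    listed = {"Chez Basile"}  # made-up: the only place the platform lists

    def fake_platform(name: str) -> Optional[str]:
        return f"CONF-{name}" if name in listed else None

    options = ["Le Petit Moulin", "Chez Basile", "Cafe Charlot"]
    print(self_healing_reservation(options, fake_platform))
```

In this run the first candidate is not listed on the platform, so the loop recovers by booking the second one. With `max_attempts=1` the same call would stop after the first failure and return an escalated outcome instead.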

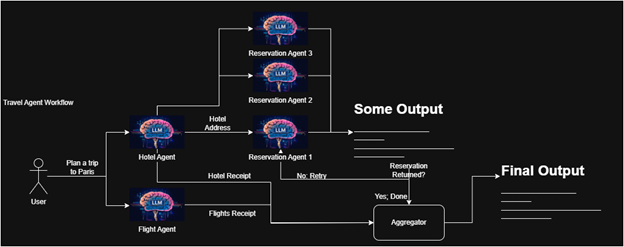

Combining Patterns: Systems of Agents in Practice

In reality, effective agentic systems combine all of these patterns.

Complex workflows are built from many focused agents, orchestrated together to achieve a shared goal. While this may feel excessive for planning a single trip, the value becomes obvious when the same workflow is reused at scale—such as a travel agency planning trips for hundreds of clients.

The same principle applies in software development:

- PR review agents validating code quality and updating documentation in parallel

- Migration agents modernizing legacy systems through sequential steps

- Security alerts triggering agents that use self-healing loops to remediate vulnerabilities until they are resolved

The Future of Agentic DevOps

This example is simplified, but the implications are real.

As organizations adopt AI, the challenge is no longer just using agents. It’s designing systems of agents that are reliable, secure, and scalable. The future of DevOps isn’t powered by a single assistant or a one-size-fits-all agent. It’s driven by well-architected agentic systems working together.

The future is already here, and it’s agentic.