Across industries, leadership teams are feeling pressure to adopt AI tools, launch pilots, and demonstrate progress. In many cases, that momentum is driven less by a clear strategic intent and more by the sense that standing still is no longer an option.

What often gets lost in that urgency is alignment. AI introduces new capabilities, but it also exposes existing assumptions about how work gets done, how value is created, and how behavior is shaped inside an organization. As a result, many AI initiatives struggle because organizations move forward without fully understanding their starting point, their long-term intent, or the systems that reinforce day-to-day behavior.

In practice, AI strategy is less about selecting the right tools and more about asking the right questions early.

- Questions that surface hidden risk and clarify whether AI is being used to optimize existing work or enable something fundamentally new.

- Questions that force leaders to examine whether incentives, performance expectations, and governance structures are evolving alongside their AI ambitions.

This post outlines three AI strategy questions that leadership teams should spend time sitting with before scaling adoption. These questions are not technical in nature. They are organizational, behavioral, and strategic by design. When explored thoughtfully, they help create the conditions for sustainable AI value, rather than short-term activity that never fully compounds.

1. Where Are the “Known/Unknown Areas” of AI Usage, and How Would We Know?

AI is already present in many organizations’ day-to-day work, often in ways leadership teams cannot fully see. Employees bring their own tools, experiment independently, and incorporate AI into workflows long before formal strategies or policies are established.

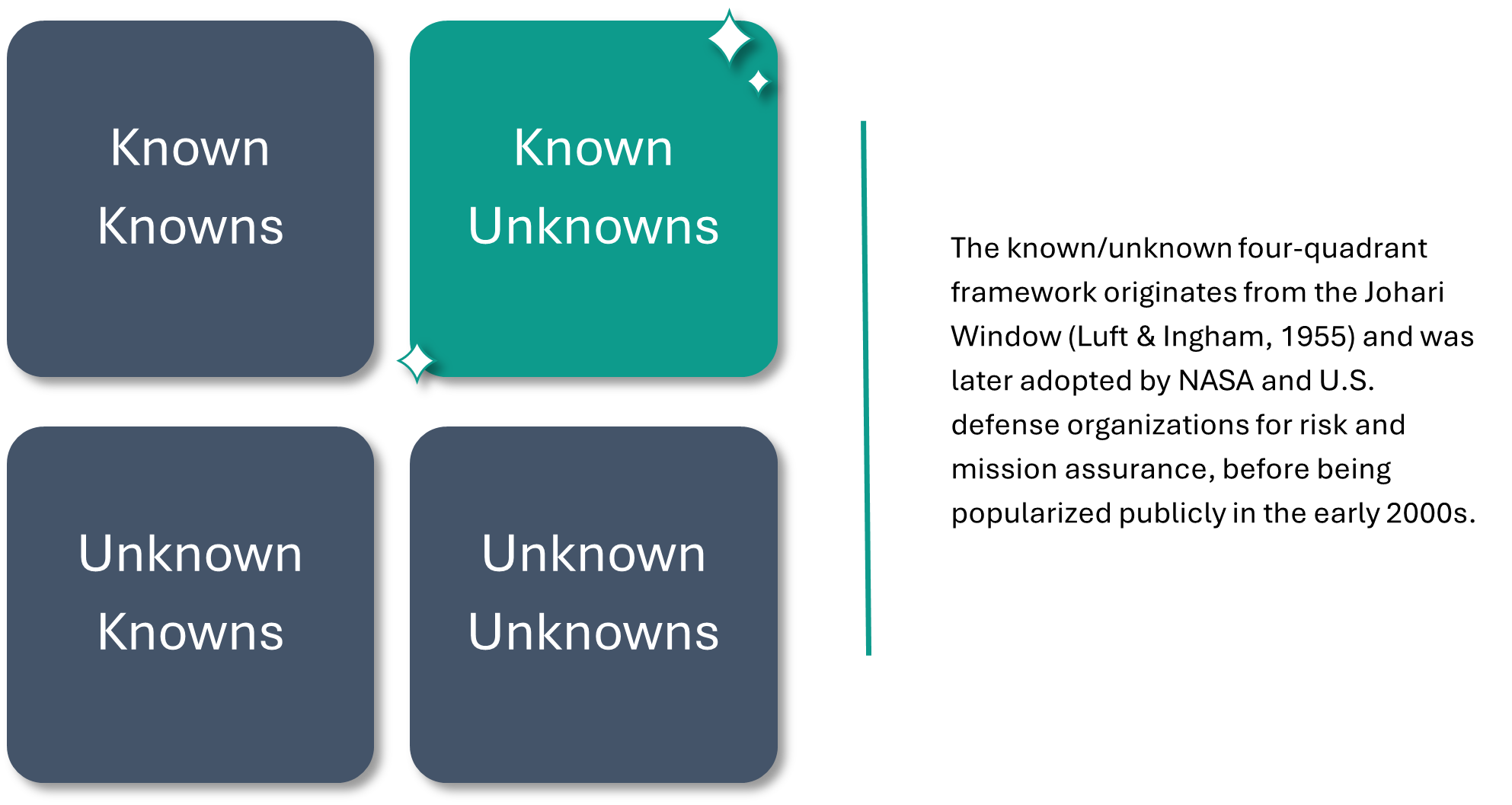

This creates a gap between assumed usage and actual usage. Leaders may know AI is being used, but not where, how often, or for what purpose. This dynamic can be framed as a “known unknown” problem: organizations are aware that AI activity exists, yet lack visibility into its scope, risk, and impact.

From a strategy perspective, this is not simply a governance issue or an IT concern. It is an organizational risk rooted in limited observability. As a result, leadership teams may be making decisions without a clear picture of how AI is already influencing:

- Security and data protection

- Compliance and risk exposure

- Workforce planning and role design

- Day-to-day decision-making across teams

The challenge is compounded by the reality that personal AI tools are increasingly accessible and powerful. Absent clear guidance, employees often default to whatever helps them move faster in the moment. Over time, this can introduce unintended exposure, whether through data leakage, inconsistent decision-making, or the quiet normalization of shadow AI practices that operate outside established controls.

Rather than attempting to eliminate this behavior through restriction alone, a more effective approach is to acknowledge it and respond deliberately. In practice, this means starting with discovery: understanding where AI usage is emerging, what problems employees are trying to solve, and where unmanaged risk may exist. From there, organizations can introduce trusted, enterprise-grade AI solutions with clearly defined guardrails, usage expectations, and training.

When handled well, this question becomes an inflection point. It allows leadership teams to move from uncertainty to informed action by replacing ambiguity with intentional design. More importantly, it establishes a foundation for everything that follows. Without clarity on current AI usage, it becomes difficult to plan responsibly, measure value, or scale adoption in a way that aligns with broader organizational goals.

Key takeaway:

Before AI strategy can move forward, leaders need visibility into how AI is already showing up, intentionally or otherwise, inside the organization.

2. How Are We Thinking About Long-Term AI Planning Through Red Ocean and Blue Ocean Lenses?

Once organizations gain clearer visibility into how AI is already being used, the next challenge becomes planning with intent. This is where many AI strategies begin to diverge, driven by fundamentally different views on what AI is meant to accomplish over time.

A helpful way to frame this planning conversation is through the lens of red ocean and blue ocean strategy.

Red ocean [1] thinking focuses on competition and efficiency. In the context of AI, this often shows up as efforts to automate existing processes, reduce cycle times, lower costs, or keep pace with competitors adopting similar tools. These initiatives are practical and, in many cases, necessary. They help organizations stabilize operations, improve productivity, and avoid falling behind.

However, red ocean AI strategies tend to concentrate on optimizing today’s work rather than reshaping tomorrow’s possibilities. When AI is viewed primarily as an efficiency lever, planning conversations stay narrowly focused on incremental gains such as faster workflows, reduced manual effort, or marginal improvements in output.

Blue ocean [2] thinking pushes the conversation further. Instead of asking how AI can make existing work more efficient, it asks what becomes possible once AI changes the constraints of that work altogether. This might include new ways of engaging customers, new services or offerings, or new expectations for how employees spend their time and apply their expertise.

In practice, the distinction between these approaches becomes clearer when viewed side by side.

Red ocean AI planning typically emphasizes:

- Automating and optimizing existing processes

- Reducing costs and cycle times

- Matching or maintaining parity with competitors

Blue ocean AI planning focuses on:

- Creating new value and differentiation

- Rethinking roles, services, and customer engagement

- Deliberately reinvesting freed capacity into higher-value work

This distinction can be surfaced through a simple but powerful question: If AI meaningfully reduces the time employees spend on routine tasks, what do we want them to do with the capacity that is created? Without an intentional answer, organizations risk reinvesting those gains back into the same patterns (more meetings, more volume, more activity) rather than channeling them toward differentiation or growth.

Most organizations will ultimately need a balance of both approaches. Red ocean strategies ensure operational stability and competitiveness. Blue ocean strategies create space for innovation and long-term value creation. The challenge is that many AI plans emphasize the former while only signaling the latter, leaving teams unclear on priorities and expectations.

By explicitly sitting with this question, leadership teams can clarify where efficiency ends and innovation begins. More importantly, they can align AI investments with a longer-term vision, one that moves beyond pilots and productivity metrics toward a more deliberate view of how AI reshapes roles, work, and value creation across the organization.

Key takeaway:

AI planning requires more than efficiency targets; it requires clear intent about how freed capacity will be reinvested to create future value.

3. How Should Our Reward Systems Evolve to Align with Our AI Ambitions?

As organizations move from experimentation to broader AI adoption, a critical question often goes unaddressed: how do existing reward systems reinforce, or undermine, the behaviors leaders are trying to encourage?

Organizations frequently signal ambition around AI. They invest in tools, encourage experimentation, and talk openly about becoming more data-driven or AI-enabled. At the same time, many of the structures that shape day-to-day behavior remain unchanged. Performance expectations, incentives, and evaluation criteria continue to reward speed, volume, and familiarity, often at odds with the thoughtful use of new capabilities.

This dynamic reflects a long-standing organizational challenge often described as the folly of “rewarding A, while hoping for B” (see Steven Kerr’s classic 1975 article, still relevant more than 50 years later [3]). In the context of AI, it emerges when organizations expect employees to adopt new tools, rethink how work gets done, or take calculated risks, without adjusting how success is measured or recognized.

When reward systems lag behind AI strategy, adoption tends to stall in subtle but predictable ways. Employees may:

- Use AI inconsistently or only when required

- Limit experimentation to avoid perceived risk

- Revert to familiar workflows that feel safer and more predictable

In some cases, AI usage becomes performative rather than purposeful: used just enough to signal compliance, but not enough to meaningfully change outcomes.

The challenge becomes even more complex as AI capabilities mature. As AI begins to supplement not just routine tasks but higher-order work such as analysis, judgment, and decision-making, organizations must confront difficult questions:

- Is AI usage optional or expected?

- How is responsible use encouraged and reinforced?

- What happens when some employees adopt AI quickly while others opt out?

Addressing these questions requires more than policy. It requires intentional alignment between stated ambitions and the systems that guide behavior. Some organizations are beginning to explore this shift by linking AI usage to performance frameworks, signaling that AI is not an add-on, but part of how work is expected to be done. Others take a more incremental approach, using incentives to encourage experimentation and learning before formal expectations are set.

Regardless of approach, the underlying principle remains the same: people respond to what is rewarded. When incentives and expectations align with AI strategy, adoption becomes clearer, more consistent, and more sustainable. When they do not, even well-intentioned AI initiatives struggle to deliver lasting value.

Key takeaway:

AI transformation becomes real not when ambition is stated, but when reward systems structurally reinforce the behaviors organizations want to see.

____________________________________________

[1] Kim, W. C., & Mauborgne, R. (2015). Red ocean traps. Harvard Business Review.

[2] Kim, W. C., & Mauborgne, R. (2004). Blue ocean strategy. Harvard Business Review.

[3] Kerr, S. (1975). On the folly of rewarding A, while hoping for B. Academy of Management Journal.